Beyond the Menu: How We Built a Multi-Tenant WhatsApp AI Gateway for Restaurants

Building a conversational AI gateway isn't just about connecting OpenAI to WhatsApp. It requires robust state management, multi-tenant architecture, and deep integration into legacy POS systems.

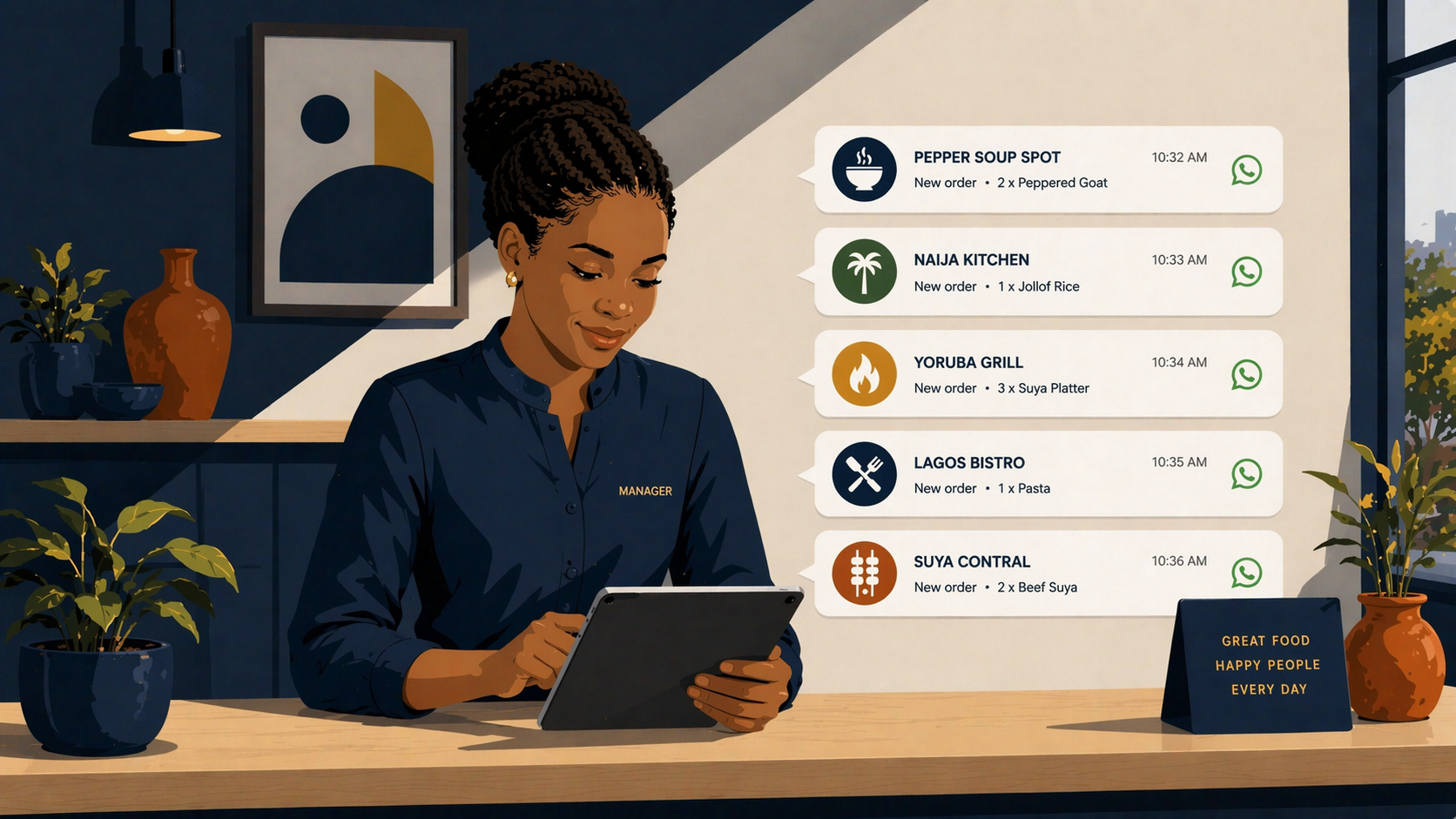

If you walk into the kitchen of any busy restaurant in Lagos right now, you will hear a very specific, anxiety-inducing sound: the simultaneous pinging of four different delivery tablets.

Glovo is ringing. Chowdeck is buzzing. Nigerian restaurants are paying up to 30% in commissions on every single order, and worse, they don't even own the customer data. If they want to tell their best customer about a new menu item, they can't. They are simply renting their own audience.

Meanwhile, the customer experience is just as fragmented. Most people don't want to download another 150MB app just to order lunch. They want to open WhatsApp, text a restaurant, say, *"Give me my usual, but no onions today,"* and have it magically arrive.

That is the holy grail of conversational commerce. But building a WhatsApp ordering bot that actually works—and doesn't make the customer want to throw their phone at the wall—is notoriously difficult.

"Customers don't speak in JSON. They use slang, make typos, change their minds mid-sentence, and ask questions that break rigid decision trees."

This is where most WhatsApp bots fail. They force users through rigid "Press 1 for Menu" flows. Instead, we used OpenAI not as a generic chatbot to make small talk, but as a hardcore intent-recognition engine.

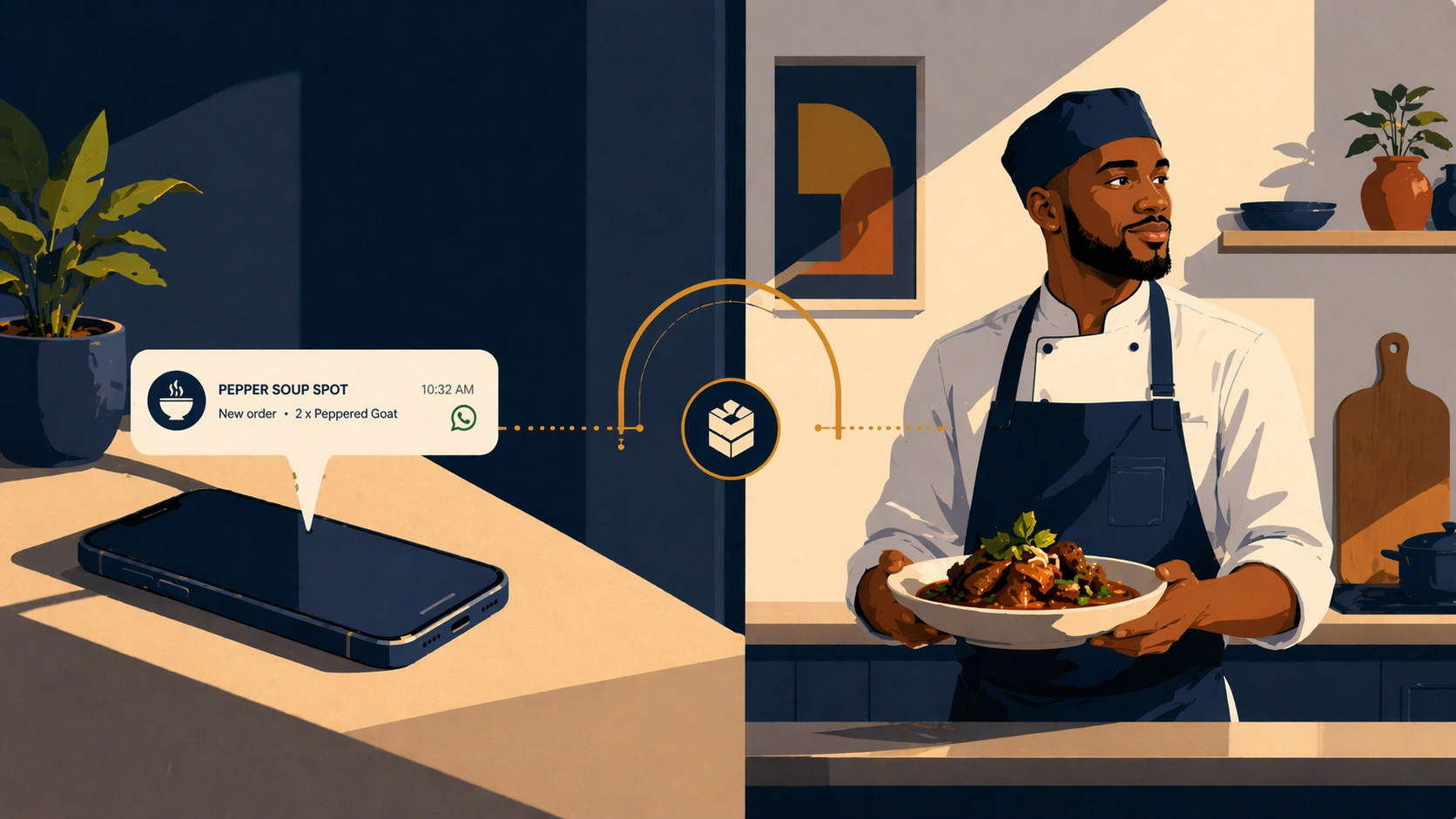

When a customer texts, *"Give me two chicken burgers, but make one without pickles, and add a large fries,"* the LLM parses that messy human text and maps it directly to the restaurant's structured menu. It uses OpenAI's function calling to extract the exact item IDs, quantities, and modifiers, emitting a structured tool call with JSON arguments, such as `addToCart({ itemId: "chk_brg_01", quantity: 1, notes: "no pickles" })` for the burger without pickles. This structured data is what gets pushed directly into the restaurant's POS system. No human intervention required.
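To make that concrete, here is a minimal sketch of how an `addToCart` tool could be declared and how its arguments could be validated before anything reaches the POS. The tool definition follows the shape of OpenAI's Chat Completions `tools` parameter, and the model returns tool arguments as a JSON string. The `MenuEntry` shape and the `parseAddToCart` helper are hypothetical names for illustration, not the actual Sirvora code.

```typescript
// Hypothetical menu entry; the real menu schema is not shown in this post.
interface MenuEntry {
  itemId: string;
  label: string;
  priceNaira: number;
}

interface AddToCartArgs {
  itemId: string;
  quantity: number;
  notes?: string;
}

// Tool definition in the shape used by OpenAI's Chat Completions "tools" parameter.
const addToCartTool = {
  type: "function",
  function: {
    name: "addToCart",
    description: "Add one menu item to the customer's cart.",
    parameters: {
      type: "object",
      properties: {
        itemId: { type: "string", description: "ID of an item on this restaurant's menu" },
        quantity: { type: "integer", minimum: 1 },
        notes: { type: "string", description: "Modifiers such as 'no pickles'" },
      },
      required: ["itemId", "quantity"],
    },
  },
} as const;

// The model returns tool arguments as a JSON string. Parse it, check the types,
// and confirm the item exists on this restaurant's menu before using it.
function parseAddToCart(rawArguments: string, menu: MenuEntry[]): AddToCartArgs {
  const parsed: unknown = JSON.parse(rawArguments);
  if (typeof parsed !== "object" || parsed === null) {
    throw new Error("Tool arguments must be a JSON object");
  }
  const { itemId, quantity, notes } = parsed as Record<string, unknown>;
  if (typeof itemId !== "string" || !menu.some((entry) => entry.itemId === itemId)) {
    throw new Error(`Unknown menu item: ${String(itemId)}`);
  }
  if (typeof quantity !== "number" || !Number.isInteger(quantity) || quantity < 1) {
    throw new Error("Quantity must be a positive integer");
  }
  const result: AddToCartArgs = { itemId, quantity };
  if (notes !== undefined) {
    if (typeof notes !== "string") {
      throw new Error("Notes must be a string");
    }
    result.notes = notes;
  }
  return result;
}
```

Validating against the menu matters because a model can produce a well-formed call for an item that does not exist. Rejecting it here keeps invented IDs out of the cart.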

Recently, we built Sirvora-ai, a centralized WhatsApp messaging gateway connecting the Meta Cloud API to Sirvora-Core, our multi-tenant restaurant OS. It wasn't just about plugging OpenAI into WhatsApp. It was a heavy infrastructure challenge. Here is how we built the backbone.

1. Multi-Tenancy: Routing Thousands of Messages Without Crossing Wires

When you build a centralized gateway for multiple restaurants, Meta sends all the WhatsApp messages from every single restaurant to one single webhook URL on your server. If 5,000 people are ordering from 50 different restaurants at the exact same time, how do you ensure the messages don't cross wires?

If a customer messages "Burger King," the AI needs to instantly load the Burger King menu, tone of voice, and pricing. If another customer messages "Chicken Republic" at the exact same millisecond, the context must be entirely isolated.

To solve this, we built a dynamic routing layer. The millisecond a payload hits our server from the Meta Cloud API, we inspect where the message was sent: the payload carries the ID of the restaurant's WhatsApp Business Account along with the ID of the receiving phone number. We use that identifier to query our database, isolate the specific restaurant tenant, pull their unique menu, and inject their specific operating rules into the AI's system prompt on the fly. Every single message gets its own isolated, tenant-specific sandbox.
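The routing step can be sketched roughly as follows. The `InboundWebhook` type covers only the subset of the Meta Cloud API webhook payload needed here, and the sketch assumes the lookup key is the `phone_number_id` from the payload's `metadata` block (keying on the account ID in `entry.id` would work the same way). `TenantConfig`, `buildSystemPrompt`, and `routeWebhook` are hypothetical names, and a `Map` stands in for the database lookup.

```typescript
// Subset of the Meta Cloud API webhook payload that matters for routing.
interface InboundWebhook {
  entry: Array<{
    id: string; // WhatsApp Business Account ID
    changes: Array<{
      value: {
        metadata: { display_phone_number: string; phone_number_id: string };
        messages?: Array<{ from: string; type: string; text?: { body: string } }>;
      };
    }>;
  }>;
}

// Hypothetical tenant record; field names are illustrative.
interface TenantConfig {
  tenantId: string;
  restaurantName: string;
  toneOfVoice: string;
  menuSummary: string;
}

interface RoutedMessage {
  tenant: TenantConfig;
  customerPhone: string;
  text: string;
  systemPrompt: string;
}

// Builds a prompt that contains only this tenant's rules and menu.
function buildSystemPrompt(tenant: TenantConfig): string {
  return [
    `You take orders for ${tenant.restaurantName}.`,
    `Tone of voice: ${tenant.toneOfVoice}.`,
    `Only offer items from this menu:\n${tenant.menuSummary}`,
  ].join("\n");
}

// Resolves every inbound text message to exactly one tenant.
// Messages for unknown numbers are dropped instead of falling back to a default tenant.
function routeWebhook(
  payload: InboundWebhook,
  tenantsByPhoneNumberId: Map<string, TenantConfig>,
): RoutedMessage[] {
  const routed: RoutedMessage[] = [];
  for (const entry of payload.entry) {
    for (const change of entry.changes) {
      const tenant = tenantsByPhoneNumberId.get(change.value.metadata.phone_number_id);
      if (!tenant) continue;
      for (const message of change.value.messages ?? []) {
        if (message.type !== "text" || !message.text) continue;
        routed.push({
          tenant,
          customerPhone: message.from,
          text: message.text.body,
          systemPrompt: buildSystemPrompt(tenant),
        });
      }
    }
  }
  return routed;
}
```

Because the system prompt is rebuilt from the resolved tenant on every message, nothing from one restaurant's context can leak into another's, even when both arrive in the same webhook batch.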

2. State Management: Why We Chose Redis

Large Language Models (LLMs) are stateless. They have the memory of a goldfish. If a customer says, *"Add a Coke to my order,"* the AI needs to know what the order actually is.

Instead of querying a heavy PostgreSQL database for every single message, we used Redis to manage the session state. Every user gets a fast, in-memory session object that tracks their current conversation step (e.g., `MENU_BROWSING`, `ITEM_SELECTION`, `CHECKOUT`), their active cart, and their selected restaurant.

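```typescript
// ConversationStep and CartItem are defined elsewhere in the codebase.
export interface Session {
  // Current conversation step, e.g. MENU_BROWSING, ITEM_SELECTION, CHECKOUT
  step: ConversationStep;
  // The customer's active cart
  cart: CartItem[];
  // The selected restaurant
  tenantId: string | null;
  selectedRestaurantSlug: string | null;
  lastActivity: number;
  // Earlier turns of the conversation
  conversationHistory: Array<{ role: "user" | "assistant"; content: string }>;
}
```

This means the AI isn't just guessing what the user wants. It has a strict, real-time view of the user's cart, allowing it to accurately calculate totals and apply delivery fees before the user even asks.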
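As a rough illustration of the session flow, the sketch below loads and saves a session through a small key-value interface. Everything beyond the `Session` fields is an assumption: the `KeyValueStore` interface, the in-memory stand-in (used so the example runs without a Redis server), the 30-minute TTL, the `conversationId` key, and the `unitPrice` field on `CartItem`. With a real Redis client, `get` would map to `GET` and `set` to `SET key value EX ttlSeconds`.

```typescript
type ConversationStep = "MENU_BROWSING" | "ITEM_SELECTION" | "CHECKOUT";

// Hypothetical cart line; the real CartItem fields are not shown in this post.
interface CartItem {
  itemId: string;
  quantity: number;
  unitPrice: number;
}

// Mirrors the Session interface above.
interface Session {
  step: ConversationStep;
  cart: CartItem[];
  tenantId: string | null;
  selectedRestaurantSlug: string | null;
  lastActivity: number;
  conversationHistory: Array<{ role: "user" | "assistant"; content: string }>;
}

// Minimal key-value contract that a Redis client wrapper could satisfy.
interface KeyValueStore {
  get(key: string): Promise<string | null>;
  set(key: string, value: string, ttlSeconds: number): Promise<void>;
}

// In-memory stand-in so the sketch runs without a Redis server.
class InMemoryStore implements KeyValueStore {
  private entries = new Map<string, { value: string; expiresAt: number }>();

  async get(key: string): Promise<string | null> {
    const entry = this.entries.get(key);
    if (!entry || entry.expiresAt <= Date.now()) return null;
    return entry.value;
  }

  async set(key: string, value: string, ttlSeconds: number): Promise<void> {
    this.entries.set(key, { value, expiresAt: Date.now() + ttlSeconds * 1000 });
  }
}

const SESSION_TTL_SECONDS = 30 * 60; // assumed idle timeout, not from the post

function sessionKey(conversationId: string): string {
  return `session:${conversationId}`;
}

// Returns the stored session, or a fresh one for a new or expired conversation.
async function loadSession(store: KeyValueStore, conversationId: string): Promise<Session> {
  const raw = await store.get(sessionKey(conversationId));
  if (raw !== null) return JSON.parse(raw) as Session;
  return {
    step: "MENU_BROWSING",
    cart: [],
    tenantId: null,
    selectedRestaurantSlug: null,
    lastActivity: Date.now(),
    conversationHistory: [],
  };
}

// Stamps the activity time and rewrites the key, which also resets its TTL.
async function saveSession(store: KeyValueStore, conversationId: string, session: Session): Promise<void> {
  const updated: Session = { ...session, lastActivity: Date.now() };
  await store.set(sessionKey(conversationId), JSON.stringify(updated), SESSION_TTL_SECONDS);
}

// Totals come from the stored cart, not from anything the model says.
function cartTotal(session: Session, deliveryFee: number): number {
  const subtotal = session.cart.reduce((sum, line) => sum + line.unitPrice * line.quantity, 0);
  return subtotal + deliveryFee;
}
```

Computing the total in plain code from the stored cart keeps arithmetic out of the model's hands. The LLM only decides which tool to call, and the numbers come from the session.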

3. The Agent Loop & Safety Gates

The biggest risk of using AI in commerce is hallucinations. You cannot allow an AI to accidentally charge a customer's card or finalize an order just because the user said, *"Yeah, that sounds good."*

To prevent this, we built a custom Agent Loop with strict "Safety Gates." We gave the AI a set of tools (functions) it can call—like `addToCart` or `checkMenu`. However, we flagged certain tools, like `checkout`, as DANGEROUS.

"If the AI attempts to call a DANGEROUS tool, our execution loop intercepts the call, blocks the AI from proceeding, and forces a hard stop."

Instead of letting the AI finalize the order, the system intercepts the request and sends a structured WhatsApp Interactive Button to the user: *"Your total is ₦15,000. Would you like me to place this order? [Yes] [No]."* The AI is never allowed to touch the money without explicit confirmation from the user, delivered as a button tap rather than free text.
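A safety gate of this kind can be sketched in a few lines. The names here (`ToolSpec`, `executeToolCall`, `buildConfirmationMessage`, the button IDs) are hypothetical. The confirmation payload follows the WhatsApp Cloud API's interactive reply-button message format, and the key assumption is that `userConfirmed` is set only from a button reply in the webhook, never from model output.

```typescript
// Hypothetical tool registry entry: each tool declares whether it needs confirmation.
interface ToolSpec {
  dangerous: boolean;
  run: (args: Record<string, unknown>) => string;
}

interface ToolCallRequest {
  toolName: string;
  args: Record<string, unknown>;
}

// Outcome of one gated tool call.
type GateResult =
  | { kind: "executed"; output: string }
  | { kind: "needs_confirmation"; pending: ToolCallRequest }
  | { kind: "rejected"; reason: string };

// Runs safe tools immediately. Dangerous tools run only when the caller passes
// userConfirmed = true; otherwise the call is parked and returned as pending.
function executeToolCall(
  tools: Map<string, ToolSpec>,
  call: ToolCallRequest,
  userConfirmed: boolean,
): GateResult {
  const tool = tools.get(call.toolName);
  if (!tool) return { kind: "rejected", reason: `Unknown tool: ${call.toolName}` };
  if (tool.dangerous && !userConfirmed) return { kind: "needs_confirmation", pending: call };
  return { kind: "executed", output: tool.run(call.args) };
}

// Builds a Cloud API interactive message with two reply buttons.
function buildConfirmationMessage(to: string, totalNaira: number) {
  const formattedTotal = totalNaira.toLocaleString("en-US");
  return {
    messaging_product: "whatsapp",
    to,
    type: "interactive",
    interactive: {
      type: "button",
      body: { text: `Your total is ₦${formattedTotal}. Would you like me to place this order?` },
      action: {
        buttons: [
          { type: "reply", reply: { id: "confirm_checkout", title: "Yes" } },
          { type: "reply", reply: { id: "cancel_checkout", title: "No" } },
        ],
      },
    },
  };
}
```

When the gate returns `needs_confirmation`, the loop would store the pending call in the session, send the button message, and stop. Only a reply carrying the `confirm_checkout` button ID would re-run the same call with `userConfirmed` set to `true`.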

Taste and Backbone

Building a truly conversational AI gateway is a perfect example of what we mean by "Taste and Backbone." The taste is the seamless, magical experience of a customer ordering dinner via a simple WhatsApp text. The backbone is the engineering and the strict safety gates running silently in the background.

At The Sircle Company, we build complex, high-throughput AI infrastructure like this for ambitious teams. We don't just build basic websites; we build heavy, scalable systems that solve actual operational nightmares.

Whether you are building the next food-tech aggregator, trying to bypass 30% delivery commissions, or need a custom AI gateway for your enterprise, we have the blueprints.

Know your customers. Know your business. Let's build your infrastructure.